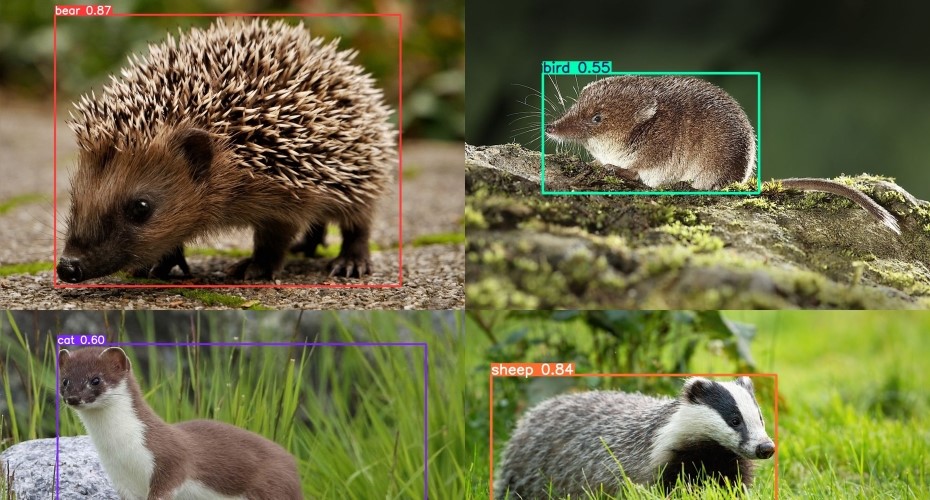

Wildlife imaging shows that AI models aren’t as smart as we think

Using AI to identify wildlife reveals a potential “transferability crisis”, researchers say.

Marketing for AI imaging systems often suggests that models can easily tackle novel scenarios across ecosystems and settings, much in the same way as human observers.

But in a new article, two University of Exeter researchers argue that this is based on a “flawed assumption”.

They use examples from species identification and diagnostic imaging to illustrate this.

While AI models work reliably within the environments in which they were trained—the researchers say that this rarely carries over to new locations, making generalisability difficult to predict.

“The take-home message is that despite being considered as the ‘gold standard’, performance benchmarks (tests used to assess AI) do not reliably indicate the true ability of AI models,” said Dr Thomas O’Shea-Wheller, from the Environment and Sustainability Institute at Exeter’s Penryn Campus in Cornwall.

“We see lots of claims purporting to compare the ability of the latest models to humans across very broad scenarios.”

“However, these are derived from performance testing on datasets that do not always carry over to real-world tasks.”

“A model trained to identify cats using stock images will perform well when tested against other stock images of cats, but this is not going to translate into effective cat detection in the wild.

“The danger is that such benchmark metrics—often composed of arbitrary image categories—are being used to overstate model performance and generalisability.”

Katie Murray, from the Centre for Ecology and Conservation, added: “In the case of wildlife identification, you can end up with something that’s not working well, but seems very confident in its conclusions.”

“Put simply, AI struggles with things it hasn’t seen before, but it won’t necessarily express that to the user.”

Dr O’Shea-Wheller notes that the issue is not so much with the technology itself, but rather with how it is used.

“AI can be incredibly powerful, but context is key – models need to be evaluated in their real-world use cases, and if they are not, it can lead to serious issues down the line.”

“In ecology, this creates challenges for species surveillance and conservation, while in contexts such as medicine, the consequences can be even more problematic.”

“Perhaps the most dangerous aspect of this, is that when a model fails, it is often not detected until extensive damage is done.”

The researchers call for caution when interpreting performance metrics—and increased adoption of tools that allow models to be rapidly tested within real-world applications.

Regarding the broader issue of benchmarks, they argue that these should not be used to estimate generalised model performance.

“As things currently stand, the only reliable way to evaluate how well an AI model will work is to actually test it within your specific use case.”

The article, published in the journal PLOS Biology, is entitled: “Deep learning in biology faces a transferability crisis.”